The Flow-to-Trust Loop: How 10 Ops Disciplines Converge to Ship Trusted AI

- Ken Johnston

- Mar 15

A Practitioner's Guide to the Modern AI Ops Stack

By Ken Johnston | AiGovOps Foundation | February 2026

The Meeting That Changed My Mind

Twenty of us crammed into an interior conference room with no natural light, and twenty more joined from the grid of video tiles above. We were there to align on the company's new AI strategy. The deck was sharp. The insights were real.

Then slide 6 hit.

"Okay, so first we stabilize drift with AIOps, on top of the MLOps layer we already have, then we wrap that with governance controls. All of that runs through GitOps so infra stays declarative. FinOps is covering the budget envelope, obviously. DevSecOps handles the security posture. Pretty straightforward."

Straightforward.

I looked around the room. Half the faces were nodding politely. The other half were doing the math on how many acronyms had just been stacked into a single slide, and coming up short on what any of them actually meant in practice.

That moment launched a weekly series breaking down every "Ops" discipline in circulation. Not the vendor pitch versions. The practitioner versions: what each one actually does, where it came from, and how it fits (or doesn't) into a modern AI system.

But something happened along the way. The more I mapped these disciplines, the more a pattern emerged that nobody was talking about. These Ops don't just coexist. They layer. They form a continuous loop, from velocity at the center to trust at the perimeter, where flow generates the need for guardrails, guardrails generate insights, and insights feed back into better flow.

And at the heart of that loop sits the question that every organization shipping generative AI must answer: How do you build and maintain trust in systems that learn, adapt, and act on their own?

This article is the answer. It compiles, refines, and extends the entire series into one definitive reference, structured around a framework we call the Flow-to-Trust Loop.

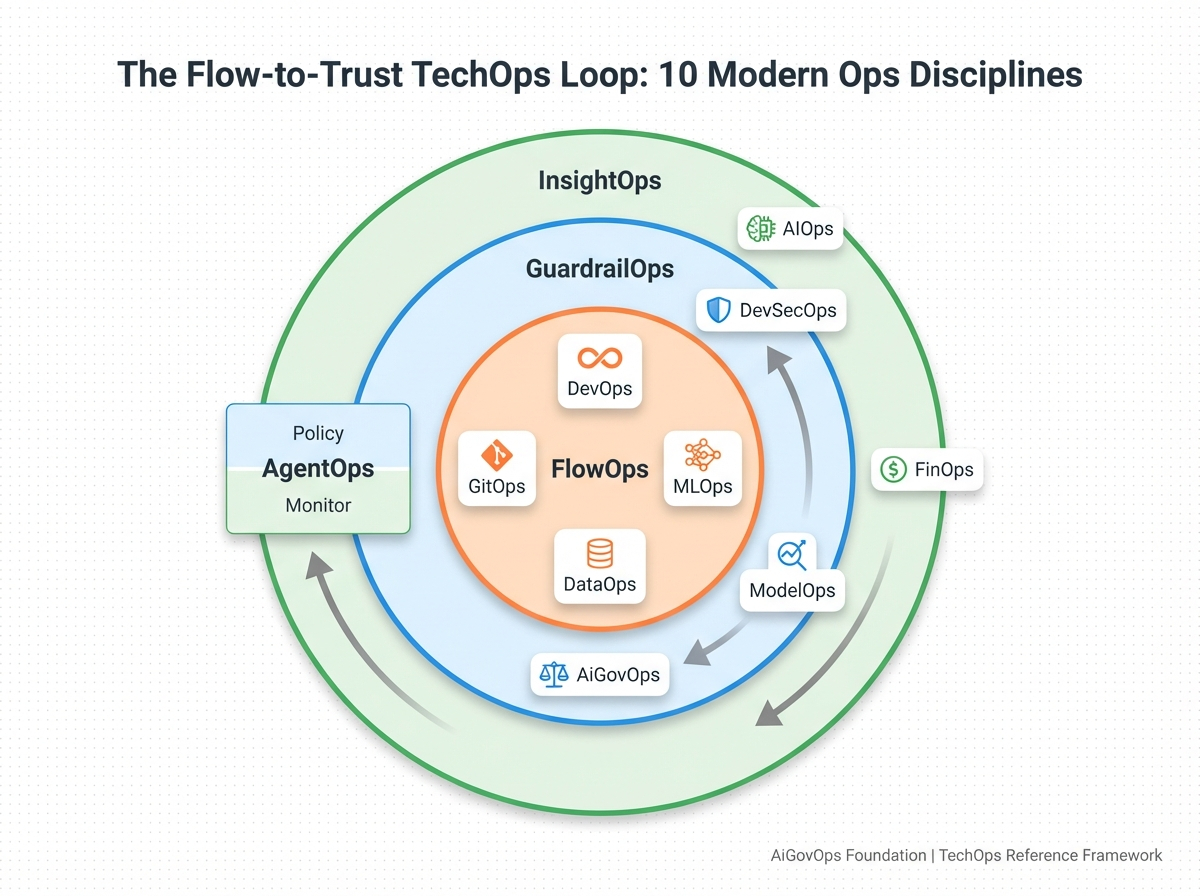

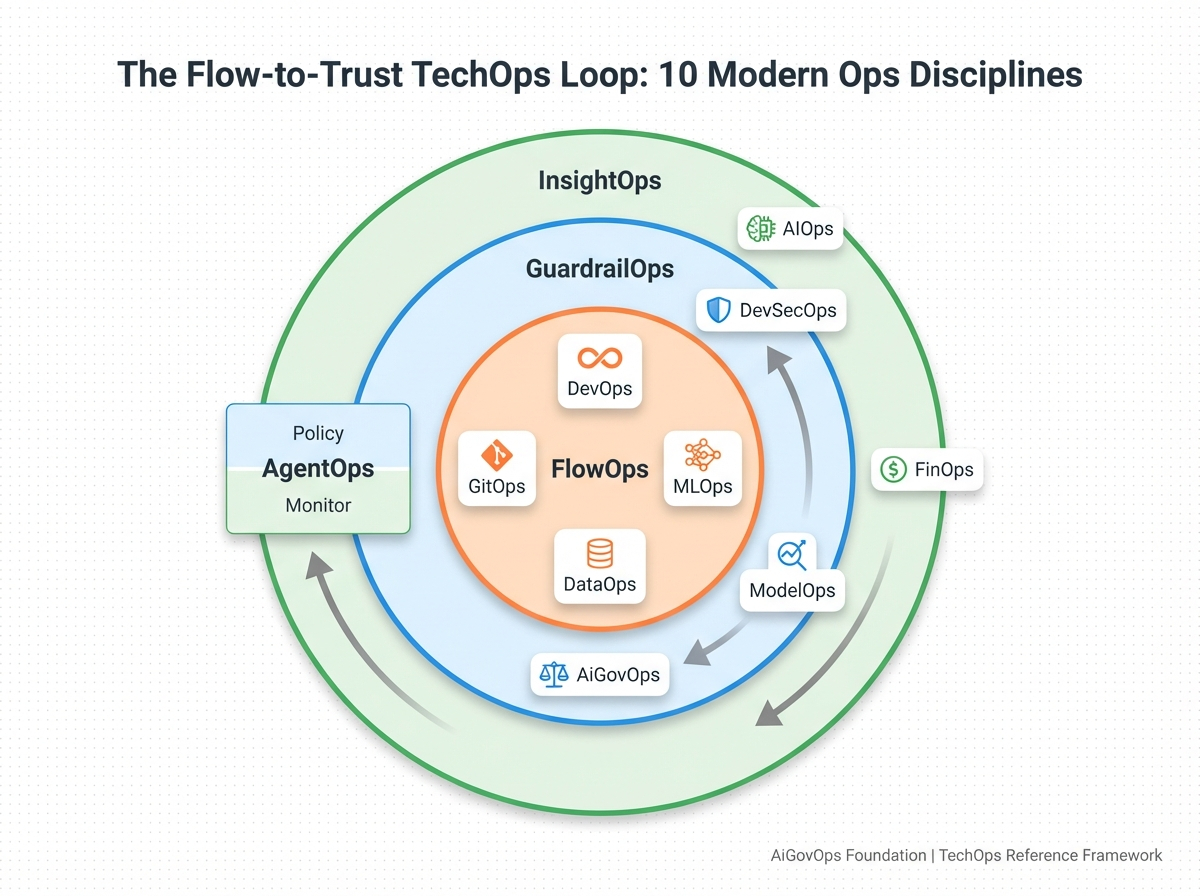

The Flow-to-Trust Loop

Most articles about Ops disciplines present them as a flat list: a glossary with no architecture. But that's not how they actually work.

These ten disciplines operate in concentric layers. The inner ring moves fast: build, ship, iterate. The middle ring adds constraints that make speed responsible. The outer ring observes the full system and feeds intelligence back to the center. And one discipline, AgentOps, spans the entire stack because autonomous agents don't live in a single layer.

The loop works like this: FlowOps at the center generates deployment velocity. GuardrailOps wraps that velocity in safety, governance, and accountability. InsightOps at the perimeter provides strategic visibility: operational intelligence and financial awareness that feed back into the inner layers. And AgentOps spans all three because autonomous agents consume data, require security, generate cost, and make decisions that need governance across every layer simultaneously.

The critical insight, and the reason this framework matters for anyone shipping AI in 2026, is that flow without trust is just risk accelerating. Every layer exists because the previous one created a new category of operational pain. And the discipline that closes the loop, that turns velocity into something an organization can actually sustain, is the one most teams are still missing.

Layer 1, FlowOps: The Velocity Engine

The center of the loop is where work actually moves. These four disciplines form the pipeline foundation that every other Ops layer depends on.

DevOps: The Foundation

Before DevOps was a buzzword, it was a rebellion. A rebellion against slow releases that took months. Against "throw it over the wall" deployments where development and operations never talked. Against systems held together with duct tape and the quiet prayers of on-call engineers.

Here's what most people still get wrong: DevOps is not CI/CD pipelines plus automation. That's the tooling. DevOps is three things: Flow (how work moves through your system), Feedback (how fast you learn what broke), and Learning (how you get better, not just busier).

These are the "Three Ways" described in The DevOps Handbook by Gene Kim, Jez Humble, Patrick Debois, and John Willis, the implementation bible for modern software delivery. The companion text, Accelerate by Dr. Nicole Forsgren, Jez Humble, and Gene Kim, brings the science, analyzing thousands of organizations to statistically prove what drives elite performance.

The annual State of DevOps Report (maintained by DORA at Google) shows that elite teams ship 208x more frequently, recover 2,604x faster from failures, experience 50% less burnout, and drive 2x better business outcomes than their peers. If you're not reading this report annually, you're guessing at what works while others have the data.

One historical detail worth noting: DevOps never produced an official manifesto, and that was intentional. Patrick Debois deliberately avoided codifying one, believing a living practice shouldn't be frozen into a canonical document. The community rallied around principles, not proclamations.

Why this matters for AI: Everyone's racing to "implement AI everywhere." Almost nobody has the DevOps foundation to actually deliver it. AI without DevOps is just expensive prototyping. If you can't deploy models reliably, version them properly, monitor them in production, and roll them back when they hallucinate, your AI program is a very expensive science experiment. DevOps isn't optional for AI. It's the substrate.

GitOps: The Declarative Blueprint

If DevOps is the mindset and CI/CD is the motion, GitOps is the blueprint.

GitOps makes Git the single source of truth for infrastructure and deployments. Infrastructure as code becomes declarative: you describe the desired state, and automation makes it so. Deployments are driven by git commits, not manual clicks. Drift between declared and actual state is detected and reconciled automatically.

The big win is consistency: what's in Git is what's in prod. But GitOps also gives you something CI/CD alone doesn't: a runtime guardrail. It doesn't just push changes; it monitors for unauthorized drift and pulls the system back into alignment. Together, CI/CD and GitOps form a closed loop of release plus reliability.
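The detect-and-reconcile idea can be sketched in a few lines. This is a minimal illustration, not how any particular GitOps controller is implemented: the state dictionaries, the `Change` type, and both function names are hypothetical, and a value of `None` is assumed to mean "absent".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    key: str
    declared: object  # value recorded in Git (None is assumed to mean "should not exist")
    actual: object    # value observed in the running system (None means "missing")


def detect_drift(declared: dict, actual: dict) -> list[Change]:
    """Return every key whose live value differs from the declared value."""
    keys = sorted(set(declared) | set(actual))
    return [
        Change(k, declared.get(k), actual.get(k))
        for k in keys
        if declared.get(k) != actual.get(k)
    ]


def reconcile(declared: dict, actual: dict) -> dict:
    """Pull the live state back to what Git declares; the declared state always wins."""
    for change in detect_drift(declared, actual):
        if change.declared is None:
            actual.pop(change.key, None)          # remove settings nobody declared
        else:
            actual[change.key] = change.declared  # restore the declared value
    return actual


# Hypothetical example: someone scaled up and enabled debug mode by hand.
declared_state = {"replicas": 3, "image": "model-server:1.4.2"}
live_state = {"replicas": 5, "image": "model-server:1.4.2", "debug": True}

drift = detect_drift(declared_state, live_state)
reconciled = reconcile(declared_state, dict(live_state))
```

Here `drift` lists the two hand-made changes (`debug` and `replicas`), and `reconciled` ends up equal to `declared_state`. A real controller would run this comparison continuously against a cluster API rather than against in-memory dictionaries.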

This matters beyond traditional software. GitOps principles are increasingly applied to data pipelines, feature stores, model configurations, and governance policy definitions, bringing version control, auditability, and consistency to the AI stack. When a regulator asks "what version of this policy was active when that model made this decision?", GitOps is how you answer.

MLOps: From Science Project to Production System

MLOps is the full-stack discipline of managing the machine learning lifecycle. It applies software engineering rigor to model versioning, data lineage, CI/CD for ML, monitoring, retraining triggers, and rollback strategies. It's the critical bridge between prototype and production.

If DevOps solved "how do we ship code faster and safer?", MLOps asks "how do we keep ML models healthy in the real world?"

The hard truth: if you don't have MLOps, you don't have ML in production. You have a science project with a pager waiting to happen. Models drift. Data shifts. Performance degrades silently. Without the operational discipline to detect, respond, and retrain, you're flying blind, and your stakeholders will figure it out before your monitoring does.
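One common way to make "data shifts" measurable is the Population Stability Index (PSI), which compares the distribution of a feature at training time with its distribution in production. The sketch below is one possible drift check, not a prescribed MLOps method; the function name, the ten-bin default, and the 0.2 retraining threshold (a widely quoted rule of thumb) are all assumptions.

```python
import math


def psi(expected: list[float], observed: list[float], bins: int = 10) -> float:
    """Population Stability Index between a training sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all training values are equal

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the training range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, o = bucket_shares(expected), bucket_shares(observed)
    return sum((oi - ei) * math.log(oi / ei) for ei, oi in zip(e, o))


RETRAIN_THRESHOLD = 0.2  # assumed rule-of-thumb cutoff, tune per feature

training = [i / 100 for i in range(100)]       # feature values seen in training
live = [0.5 + i / 200 for i in range(100)]     # production values, shifted upward

needs_retraining = psi(training, live) > RETRAIN_THRESHOLD
```

With identical samples the index is zero; with the shifted sample above it is far over the threshold, so `needs_retraining` is true. In a pipeline, that flag would be the retraining trigger or the alert.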

DataOps: Your AI Is Only as Good as Your Pipeline

Every model is downstream from a thousand decisions you made about your data. DataOps is the discipline of treating data like code: versioned, tested, and continuously deployed.

It means automating the movement, validation, and transformation of data. It means tracking lineage and provenance so you can answer "where did this data come from, when was it captured, and what version did we use?", because auditors will come calling. It means monitoring freshness, schema changes, and quality, and building trust into every step of the data lifecycle.
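To make "validated and traceable" concrete, here is a minimal sketch of a schema check plus a lineage record that fingerprints exactly what a run consumed. The schema, the field names, the source path, and the version string are hypothetical; production teams would typically rely on a dedicated data-quality or lineage tool rather than hand-rolled checks.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical schema for an incoming batch.
EXPECTED_SCHEMA = {"customer_id": int, "amount": float, "country": str}


def validate(rows, schema):
    """Return a list of human-readable schema violations (empty means clean)."""
    problems = []
    for i, row in enumerate(rows):
        if set(row) != set(schema):
            problems.append(f"row {i}: columns {sorted(row)} != {sorted(schema)}")
            continue
        for col, typ in schema.items():
            if not isinstance(row[col], typ):
                problems.append(f"row {i}: {col} is not {typ.__name__}")
    return problems


def lineage_record(rows, source, pipeline_version):
    """Fingerprint the exact data a run consumed so it can be traced later."""
    canonical = json.dumps(rows, sort_keys=True).encode("utf-8")
    return {
        "source": source,
        "pipeline_version": pipeline_version,
        "row_count": len(rows),
        "sha256": hashlib.sha256(canonical).hexdigest(),
        "captured_at": datetime.now(timezone.utc).isoformat(),
    }


batch = [
    {"customer_id": 1, "amount": 19.99, "country": "US"},
    {"customer_id": 2, "amount": "12", "country": "DE"},  # wrong type on purpose
]

issues = validate(batch, EXPECTED_SCHEMA)
record = lineage_record(batch, source="s3://example-bucket/orders", pipeline_version="0.3.0")
```

The `issues` list catches the string-typed amount before it reaches a model, and `record` is the kind of artifact that answers the auditor's "which data, which version, when" question.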

The DataOps Manifesto lays out 18 principles drawn directly from Agile, DevOps, and lean manufacturing, applied specifically to data and analytics.

When DataOps is missing, your models drift silently, dashboards lie, and decision-makers stop trusting your outputs. When it's present, your AI outputs earn trust because they're traceable, timely, and correct. DataOps isn't about tools. It's about culture, reliability, and flow.

Layer 2, GuardrailOps: Safety, Governance, and Trust

The middle ring wraps around flow and adds the constraints that make velocity responsible. Without guardrails, speed is just risk accelerating. And with generative AI (systems that produce novel content, reason across contexts, and act with increasing autonomy), the guardrails matter more than they ever have.

DevSecOps: Security Woven In, Not Bolted On

DevSecOps is DevOps grown up, with security woven into the pipeline from the very first commit, not bolted on after launch.

The old model was painfully familiar: dev builds it, ops deploys it, security audits it six weeks later and files a report nobody reads until there's an incident. DevSecOps flipped that: security scans are automated and constant, secrets management is a pipeline stage, SBOMs (software bills of materials) are tracked, and threat modeling happens during design, not after launch.
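As an illustration of a security check that runs on every commit, the sketch below scans changed files for two well-known secret shapes. It is a toy, not a replacement for a real scanner: the pattern set is deliberately tiny, the function and variable names are hypothetical, and the key shown is the placeholder value AWS uses in its own documentation.

```python
import re

# Two widely recognized secret formats; real scanners ship hundreds of rules.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:[A-Z]+ )?PRIVATE KEY-----"),
}


def scan_for_secrets(files: dict[str, str]) -> list[tuple[str, int, str]]:
    """Return (path, line number, rule name) for every suspected secret."""
    findings = []
    for path, text in files.items():
        for line_no, line in enumerate(text.splitlines(), start=1):
            for name, pattern in SECRET_PATTERNS.items():
                if pattern.search(line):
                    findings.append((path, line_no, name))
    return findings


# Hypothetical contents of the files touched by a commit.
changed_files = {
    "config/settings.py": 'REGION = "us-west-2"\nKEY = "AKIAIOSFODNN7EXAMPLE"\n',
    "README.md": "No secrets here.\n",
}

findings = scan_for_secrets(changed_files)
build_should_fail = bool(findings)
```

Wired into a pipeline stage, a non-empty `findings` list fails the build before the secret ever reaches the main branch.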

The DevSecOps Manifesto, authored by Shannon Lietz and community contributors, captures this shift in nine principles. The core thesis: "Leaning in over always saying no." Security teams become enablers, not gatekeepers.

For AI systems, this is critical. These systems aren't just running code; they're decision-makers. They generate content, answer customer tickets, approve transactions, and increasingly act autonomously. DevSecOps for AI means model integrity audits, chain-of-thought logging, explainability enforcement, and guardrails for autonomous agents. If you're shipping agentic systems without a DevSecOps layer, you're not innovating; you're escalating risk.

ModelOps: Track What a Model Did, Why, and When

ModelOps is often confused with MLOps, but they serve different purposes. MLOps covers the full ML lifecycle from experimentation through deployment. ModelOps zooms in on what happens after a model is trained: the governance, auditability, and compliance of model behavior in production.

Think of it this way: DataOps moves the data. MLOps moves the model from lab to prod. ModelOps governs the model after it lands in prod.

This separation matters because the questions you face in production are fundamentally different from the questions you face during development. "Did the model train correctly?" is an MLOps question. "Can we explain exactly what this model was doing, to whom, with what data, at what confidence, on what date?" is a ModelOps question. And increasingly, it's the question that matters in a boardroom or a courtroom.
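One way to be able to answer that production question is to write a structured record for every decision at the moment it is made. The sketch below shows the shape such a record might take; the `DecisionRecord` fields, the model name, and the example values are hypothetical, and hashing the inputs (rather than storing them) is an assumed design choice for keeping personal data out of the log.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    model_name: str
    model_version: str
    subject_id: str      # to whom the decision applied
    input_sha256: str    # fingerprint of the data the model saw
    output: str
    confidence: float
    decided_at: str      # UTC timestamp in ISO 8601 form


def record_decision(model_name, model_version, subject_id, features, output, confidence):
    """Build an immutable audit record for a single model decision."""
    payload = json.dumps(features, sort_keys=True).encode("utf-8")
    return DecisionRecord(
        model_name=model_name,
        model_version=model_version,
        subject_id=subject_id,
        input_sha256=hashlib.sha256(payload).hexdigest(),
        output=output,
        confidence=confidence,
        decided_at=datetime.now(timezone.utc).isoformat(),
    )


rec = record_decision(
    "credit-risk", "2.3.1", "applicant-0042",
    {"income": 54000, "tenure_months": 18}, "approve", 0.91,
)
audit_line = json.dumps(asdict(rec), sort_keys=True)  # one line per decision in an append-only log
```

Each field maps to one clause of the question: what model, to whom, with what data, at what confidence, on what date.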

AiGovOps: The Missing Layer

We've now covered six Ops disciplines across two layers. Each one emerged to solve a real problem. Each one made the stack more capable.

But look at what's still missing.

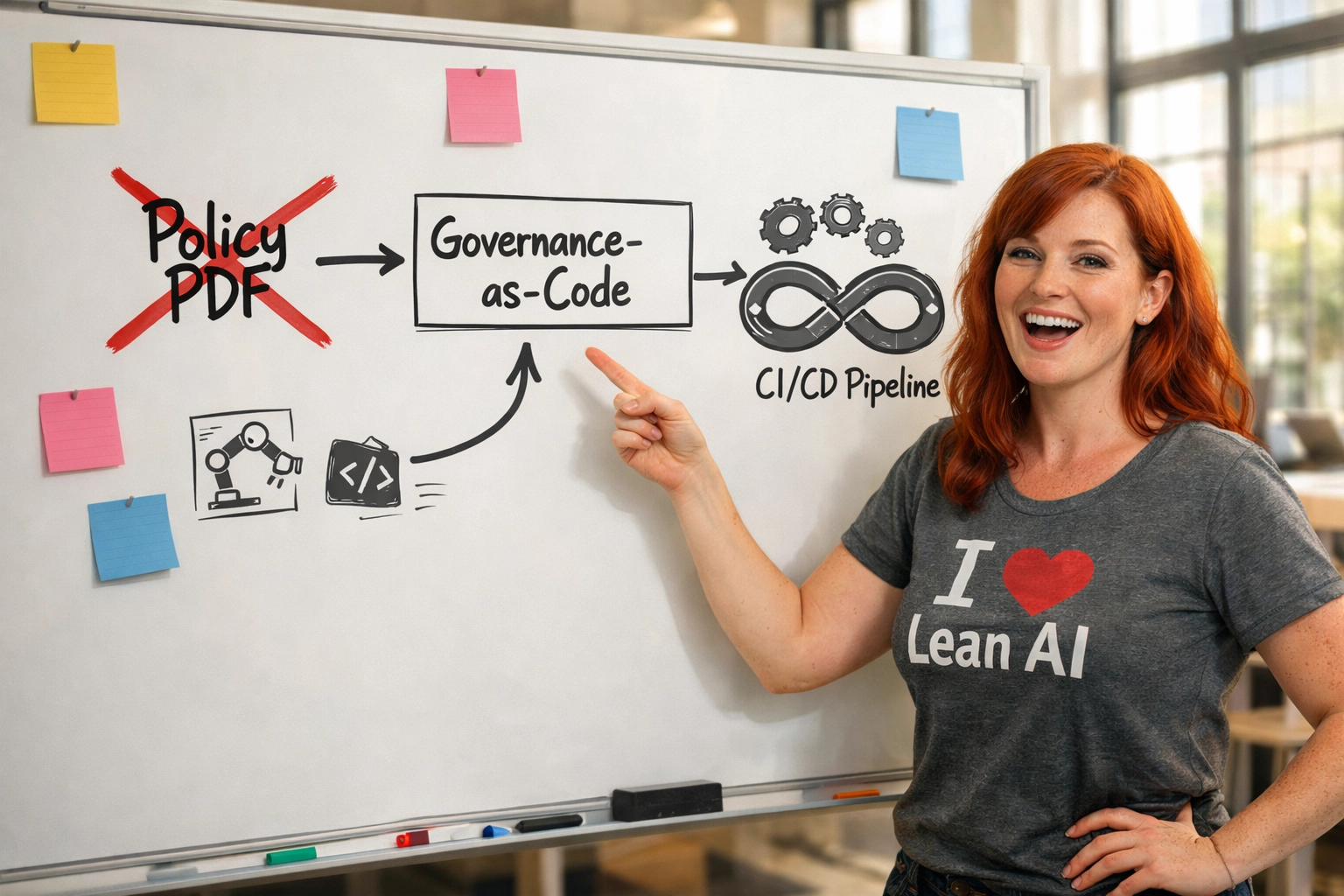

We have flow. Security. Model lifecycle management. Data integrity. Post-deployment tracking. What we don't have, and what the industry has conspicuously failed to build, is a discipline for operationalizing AI governance itself. For turning trust, accountability, ethics, and regulatory compliance into engineering practices that run in pipelines, not in policy PDFs.

That's the gap AiGovOps fills.

The need became urgent with generative AI. Traditional ML models had bounded behavior: a fraud detection model outputs a score between 0 and 1. You can test that. You can validate the decision boundary. But generative AI systems produce unbounded outputs. They write. They reason. They create. They hallucinate. They can be prompted into behaviors their builders never anticipated. The attack surface isn't just technical; it's semantic, reputational, legal, and ethical, all at once.

Policy documents can't govern systems that change behavior based on a prompt. Only operational controls (embedded in pipelines, enforced at runtime, generating evidence by default) can keep pace.

What's inside AiGovOps? It is no longer just checklists and risk reviews. AiGovOps is becoming a real engineering discipline:

Auditability as code: lineage, metadata, and logs auto-captured at train, tune, and deploy

Runtime policy enforcement: machine-readable policy cards governing what agents and models can do

Agent observability: hooks to understand goals, track actions, and flag deviation

Compliance automation: systems that detect and prevent data misuse or overreach in real-time

Self-generating model and data cards: not "docs" but versioned artifacts living in your CI/CD
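To show what runtime policy enforcement with evidence by default could look like, here is a minimal sketch. It is not an AiGovOps Foundation specification: the `PolicyCard` fields, the tool names, and the spend limit are hypothetical, and a real policy card would likely be loaded from a versioned file rather than defined inline.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class PolicyCard:
    policy_id: str
    version: str
    allowed_tools: frozenset
    max_spend_usd: float


@dataclass
class PolicyEnforcer:
    card: PolicyCard
    audit_log: list = field(default_factory=list)
    spent_usd: float = 0.0

    def authorize(self, tool: str, cost_usd: float) -> bool:
        """Allow or deny one action, and record the decision either way."""
        if tool not in self.card.allowed_tools:
            allowed, reason = False, "tool_not_allowed"
        elif self.spent_usd + cost_usd > self.card.max_spend_usd:
            allowed, reason = False, "budget_exceeded"
        else:
            allowed, reason = True, "ok"
            self.spent_usd += cost_usd
        # Evidence is produced on every call, not only on denials.
        self.audit_log.append({
            "policy_id": self.card.policy_id,
            "policy_version": self.card.version,
            "tool": tool,
            "allowed": allowed,
            "reason": reason,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return allowed


card = PolicyCard("support-agent", "1.2.0", frozenset({"search_kb", "draft_reply"}), 1.00)
enforcer = PolicyEnforcer(card)

first = enforcer.authorize("search_kb", 0.40)   # permitted tool, within budget
second = enforcer.authorize("send_wire", 0.10)  # tool is not on the card
third = enforcer.authorize("search_kb", 0.70)   # would exceed the spend limit
```

Because every log entry carries the policy version, the log itself answers "which policy was active when this action was allowed or blocked".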

The term #AiGovOps was created by Ken Johnston and is being developed through the nonprofit AiGovOps Foundation, a community of practitioners dedicated to governance at the speed of deployment.

Layer 3, InsightOps: Strategic Visibility

The outer ring observes the entire system and provides the intelligence that drives better decisions, both operational and financial.

AIOps: Reactive Intelligence for Operations

AIOps means "AI for Ops": using machine intelligence to monitor, correlate, and respond to operational signals at a speed and scale humans can't match. In practice that means live anomaly detection across telemetry, logs, metrics, and events; autonomous responses to incidents such as scaling, restarts, and reroutes; and correlation of disparate signals into actionable alerts instead of alert storms that paralyze on-call teams.
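The simplest form of live anomaly detection is a rolling baseline with a deviation threshold. The sketch below uses a rolling z-score, which is only one of many possible techniques and far simpler than what commercial AIOps platforms do; the window size, the threshold of three standard deviations, the five-sample warm-up, and the latency values are all assumptions.

```python
from collections import deque
from statistics import mean, stdev


def detect_anomalies(values, window=20, threshold=3.0):
    """Return indices of points far outside the recent rolling baseline."""
    history = deque(maxlen=window)
    anomalies = []
    for i, v in enumerate(values):
        if len(history) >= 5:  # wait for a few samples before judging
            mu, sigma = mean(history), stdev(history)
            if sigma > 0 and abs(v - mu) / sigma > threshold:
                anomalies.append(i)
                continue  # keep the outlier out of the baseline
        history.append(v)
    return anomalies


# Hypothetical request latencies in milliseconds, with one spike.
latency_ms = [100, 102, 98, 101, 99, 100, 103, 97, 100, 450, 101, 99]

spikes = detect_anomalies(latency_ms)
```

Only the 450 ms sample at index 9 is flagged. Excluding flagged points from the baseline is a deliberate choice here so that a single spike does not widen the threshold for the points that follow.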

The goal: move from human-driven monitoring to machine-assisted insight and mitigation. AIOps isn't just for traditional ops teams anymore; it's a survival skill for anyone running LLMs, agents, or auto-scaling model infrastructure.

FinOps: Making Cost a First-Class Metric

AI infrastructure isn't cheap. FinOps (a portmanteau of "Finance" and "DevOps") is the practice of bringing real-time financial accountability to cloud and technology spend.

The FinOps Foundation (a Linux Foundation project) maintains the definitive framework, built around six core principles: teams collaborate, business value drives decisions, everyone takes ownership, data is accessible and accurate, FinOps is enabled centrally, and organizations take advantage of the variable cost model of the cloud.

It's not just about reducing spend; it's about aligning financial decisions with engineering velocity. GPU costs for generative AI training and inference can run into the millions of dollars. Nobody wants a month-end bill that shocks the board.
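Avoiding the month-end surprise starts with projecting spend while there is still time to act. The sketch below uses a straight-line run-rate projection, which is an assumption (real workloads are rarely linear), and all figures and function names are made up for illustration.

```python
def project_month_end(spend_to_date: float, day_of_month: int, days_in_month: int) -> float:
    """Straight-line projection: average daily spend so far times days in the month."""
    daily_run_rate = spend_to_date / day_of_month
    return daily_run_rate * days_in_month


def budget_status(spend_to_date, day_of_month, days_in_month, budget):
    """Summarize whether the current run rate will blow through the budget."""
    projected = project_month_end(spend_to_date, day_of_month, days_in_month)
    return {
        "projected": projected,
        "over_budget": projected > budget,
        "overrun": max(projected - budget, 0.0),
    }


# Hypothetical GPU spend: $42,000 by day 12 of a 30-day month, against a $90,000 budget.
status = budget_status(42_000, 12, 30, 90_000)
```

At a run rate of $3,500 per day the projection is $105,000, a $15,000 overrun that is visible on day 12 instead of day 30.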

Spanning the Stack: AgentOps

AgentOps sits outside the concentric rings for a reason: LLM-powered agents don't live in a single layer. They interact with the entire stack, consuming data (FlowOps), requiring security constraints (GuardrailOps), generating cost (InsightOps), and making autonomous decisions that need governance across all of them simultaneously.

Think of AgentOps as the DevSecOps of AI autonomy. It's not just about deploying agents; it's about keeping them accountable. This is especially critical for internal copilots with broad permissions, external-facing agents with live user input, and multi-agent orchestration where tool-using, goal-seeking agents interact with each other and the real world.

Agents are the reason the Flow-to-Trust Loop must be a loop and not a linear stack. An agent's action in production can trigger governance reviews, generate unexpected costs, create security incidents, and produce data that feeds back into model training, all in a single execution chain.
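A small sketch makes the cross-layer point tangible: one agent run, summarized into the signals each layer would care about. The `AgentStep` fields, the tool names, and the mapping of fields to layers are hypothetical simplifications chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentStep:
    tool: str
    cost_usd: float                # a cost signal for InsightOps
    wrote_data: bool               # new data flowing back into FlowOps
    needed_elevated_access: bool   # a security signal for GuardrailOps


def summarize_run(steps):
    """Roll one execution chain up into per-layer signals."""
    return {
        "flow_ops": {"datasets_written": sum(s.wrote_data for s in steps)},
        "guardrail_ops": {
            "elevated_calls": [s.tool for s in steps if s.needed_elevated_access]
        },
        "insight_ops": {"total_cost_usd": round(sum(s.cost_usd for s in steps), 4)},
    }


# Hypothetical single run of a support agent.
run = [
    AgentStep("search_kb", 0.02, wrote_data=False, needed_elevated_access=False),
    AgentStep("update_crm", 0.15, wrote_data=True, needed_elevated_access=True),
    AgentStep("draft_reply", 0.03, wrote_data=False, needed_elevated_access=False),
]

summary = summarize_run(run)
```

Three steps in one chain produce a data write, an elevated-access call, and a cost figure, which is why a single agent run cannot be owned by any one layer.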

The Pattern Nobody Talks About

Step back and look at the full arc. Every Ops discipline in this stack emerged the same way: a real operational pain point that couldn't be solved with existing practices, a cultural shift, engineering discipline turning principles into repeatable practices, and a community that defined and evangelized the approach.

DevOps emerged because dev and ops didn't talk. DevSecOps emerged because security was bolted on too late. MLOps emerged because ML models rotted in production. DataOps emerged because nobody could trace where the data came from. FinOps emerged because cloud bills were out of control. And AiGovOps is emerging now because AI governance is stuck in policy documents while AI systems ship at CI/CD speed.

The pattern is always the same: the practice must move at the speed of the thing it governs. When governance can't keep pace with deployment, you get governance debt, and governance debt, like technical debt, compounds silently until it doesn't.

Why Now: The 2026 Inflection Point

Regulation is arriving. The EU AI Act becomes generally applicable on August 2, 2026 (some high-risk obligations follow in 2027), bringing transparency requirements, risk classifications, and penalties of up to 7% of global annual turnover for the most serious violations. Organizations that can't demonstrate evidence-based compliance will face real consequences.

Governance failures are accelerating. xAI pushed a single prompt update to Grok with no guardrails review, no red-teaming, and no staged rollout, resulting in the generation of harmful content, bans in multiple countries, and a lost federal contract. A senior U.S. cybersecurity official uploaded classified documents to public ChatGPT. These aren't edge cases. They're the inevitable result of systems shipping faster than oversight can follow.

The market is responding. 47% of Fortune 100 boards have now assigned AI risk oversight, a 3x increase from 2023. The organizations that operationalize governance first won't just avoid risk; they'll ship faster than competitors still stuck in manual review cycles.

The Stack at a Glance

| Layer | Discipline | Core Question |
| --- | --- | --- |
| FlowOps | DevOps | Can we ship reliably? |
| FlowOps | GitOps | Is declared state the actual state? |
| FlowOps | MLOps | Can we keep models healthy in production? |
| FlowOps | DataOps | Can we trust and trace our data? |
| GuardrailOps | DevSecOps | Are we shipping securely? |
| GuardrailOps | ModelOps | Can we explain what a model did, when, and why? |
| GuardrailOps | AiGovOps | Can we ship responsibly, and prove it? |
| InsightOps | AIOps | Do we know what's happening right now? |
| InsightOps | FinOps | Do we know what it's costing us? |
| Spanning | AgentOps | Can we keep autonomous systems accountable? |

The Trust Question

Here's what it comes down to.

Every discipline in this stack exists because someone, somewhere, shipped something that broke in a way existing practices couldn't prevent. DevOps exists because deployments were fragile. DevSecOps exists because breaches were inevitable. MLOps exists because models decayed. DataOps exists because data lied. FinOps exists because bills exploded. AIOps exists because systems outgrew human monitoring.

And AiGovOps exists because generative AI introduced a category of risk that none of the other disciplines were designed to handle: systems that produce novel, unbounded behavior, at scale, with real-world consequences, faster than any human review process can follow.

You can build the fastest pipeline in the world. You can secure it, monitor it, version it, and optimize its cost. But if you can't prove, with evidence and not intent, that the system behaves responsibly, accountably, and within the bounds of what your organization, your customers, and your regulators expect?

Then you haven't built trust. And without trust, nothing else scales.

The Flow-to-Trust Loop is how you get there. Flow at the center. Trust at the perimeter. Every layer earning the next.

What Comes Next

Join the community. The AiGovOps Foundation is building a global practitioner community dedicated to governance at the speed of deployment. We're builders, buyers, researchers, and investors who believe governance must be provable, auditable, and operational by design.

Come to the event. On February 26, we're hosting our inaugural event, "From Intention to Evidence: Operationalizing AI Governance," at AI House on the Seattle waterfront. Space is intentionally limited.

Follow the conversation. Find us on LinkedIn and Substack where we're unpacking AI governance failures, spotlighting governance-first startups, and counting down to the EU AI Act deadline.

Ken Johnston is co-founder of the nonprofit AiGovOps Foundation and creator of the #AiGovOps term. With deep roots in DevSecOps, quality engineering, and enterprise AI (including leading the reskilling of nearly 10,000 engineers at Microsoft), he's building the practitioner community and operational standards that will define how responsible AI ships at scale. Reach him at ken@aigovops.ai.
