Agentic Hell: Why We're Repeating the Worst Mistakes in AI

- Ken Johnston

- Mar 15

- 6 min read

"Those who cannot remember the past are condemned to build recursive agent loops with no observability." (probably not George Santayana)

Every decade or so, we rediscover a new flavor of the same old problem. In the '90s, it was DLL Hell: version mismatches, overwritten binaries, and shared libraries that made shipping anything feel like defusing a bomb. In the 2010s, it was Microservice Hell: over-split systems, copy-pasted services, and a dizzying mesh of internal APIs that made debugging feel like spelunking through spaghetti.

Now, in the golden glow of the AI boom, we're staring down the next iteration of the same architectural chaos:

👹 Agentic Hell.

And yes, it's coming fast.

What's Agentic Hell?

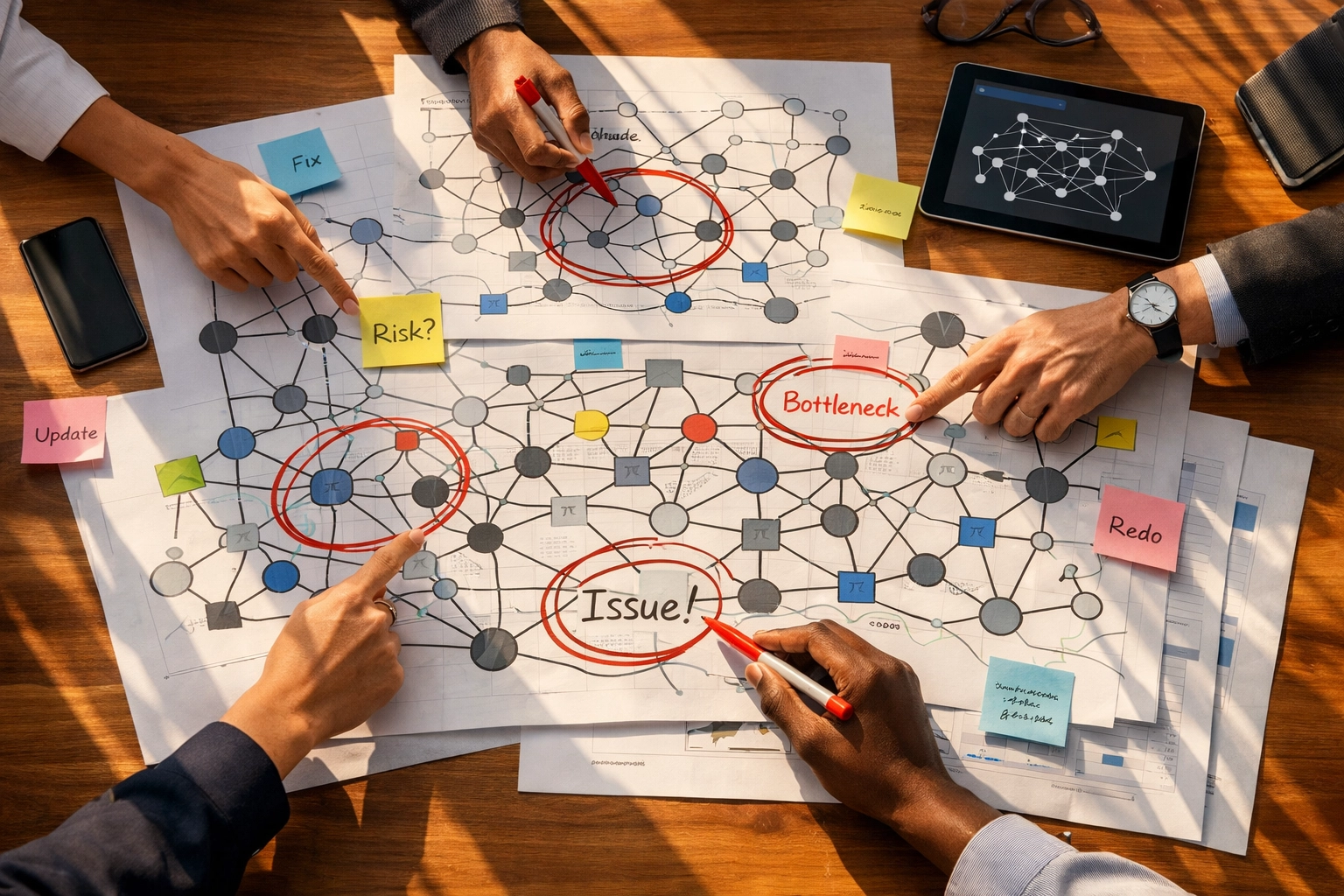

Agentic Hell is what happens when you build "autonomous" LLM agents without versioning, without constraints, and without shared contracts: just vibes and recursive API calls.

In today's race to chain, orchestrate, and embed agents into every layer of the stack, we're seeing:

- Agents calling other agents with no clear control boundaries
- Conflicting memory systems, inconsistent tool definitions
- Frameworks replicating functionality with zero differentiation (BabyAGI vs AutoGPT vs CrewAI vs LangGraph vs your weekend hack project)
- And worst of all, emergent, unpredictable behavior that's impossible to trace, log, or test

In other words: a distributed system with all the baggage of microservices, none of the maturity, and a neural net on top.

This Is Dependency Hell in Disguise

Let's be clear: Agentic Hell isn't new. It's just Dependency Hell with a better website.

Whether it was:

- DLLs clobbering each other on shared Windows installs
- Java classloaders choking on mismatched JARs
- Microservices spinning up clones to avoid cross-team coordination
- Or now, agents duplicating functionality with no ownership or accountability

…it's all the same underlying pattern:

Unmanaged complexity + missing contracts = entropy.

🏄 #BigDataSurfers Key Point

The dependency graph doesn't lie, but we keep ignoring it. Every "Hell" in software history has been rooted in the same architectural sin: building systems where you can't answer "What depends on what, and why?" Whether it's DLLs, microservices, or AI agents, when your dependency graph becomes an unknowable tangle, you're not building intelligent systems. You're building unintelligible ones. The graph always wins.
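To make "What depends on what?" concrete, here is a minimal sketch that treats agents and tools as nodes in a call graph and looks for a cycle with a depth-first search. The graph contents and the names `CALL_GRAPH` and `find_cycle` are hypothetical, invented for illustration; a real system would build the graph from its own tool registrations or traces.

```python
from typing import Dict, List, Optional

# Hypothetical call graph: each key is an agent or tool, each value lists what it calls.
CALL_GRAPH: Dict[str, List[str]] = {
    "expense_agent": ["policy_lookup", "transaction_history"],
    "policy_lookup": ["transaction_history"],
    "transaction_history": ["notification_agent"],
    "notification_agent": ["expense_agent"],  # the edge that closes the loop
}


def find_cycle(graph: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one cycle as a list of nodes (first == last), or None if acyclic."""
    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current path, fully explored
    color = {node: WHITE for node in graph}
    path: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        color[node] = GRAY
        path.append(node)
        for callee in graph.get(node, []):
            state = color.get(callee, WHITE)
            if state == GRAY:
                # The callee is already on the current path, so we found a loop.
                return path[path.index(callee):] + [callee]
            if state == WHITE:
                found = visit(callee)
                if found:
                    return found
        path.pop()
        color[node] = BLACK
        return None

    for node in list(graph):
        if color[node] == WHITE:
            cycle = visit(node)
            if cycle:
                return cycle
    return None


print(find_cycle(CALL_GRAPH))
```

Running a check like this in CI, against whatever graph your system can export, is one way to notice a loop before production does.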

The Common Anti-Patterns

Here's what every "Hell" has in common:

| Name | Symptoms |
| --- | --- |
| Version Drift | Updates that silently break functionality across systems |
| Clone & Diverge | Code copied instead of shared, often becoming out of sync |
| Ghost APIs | Interfaces no longer maintained or consistent |
| Opaque Graphs | You can't tell what called what, or why |
| No Ownership | Everyone builds the same thing, differently, in isolation |

Agentic Hell adds one more twist: non-determinism.

It's one thing when a microservice misbehaves. It's another when an autonomous agent rewrites your workflow... or decides not to.

📋 Field Note: When the Agent Loop Ate Production

Company: Mid-sized fintech implementing "intelligent expense review"

The Setup: A well-intentioned team built an agent to classify expenses, trigger approvals, and summarize monthly spend. The agent could call a "lookup policy" tool, a "fetch transaction history" tool, and a "send notification" tool. Simple enough, right?

What Happened: On a Friday afternoon, an edge case expense came through: a $47.82 charge labeled "Office supplies / Software subscription / Team lunch?" The agent couldn't confidently classify it, so it called the policy lookup tool. The policy tool (also agent-powered) responded with: "Insufficient context, please provide transaction history." So the main agent called the transaction history tool. That tool, trying to be helpful, triggered the notification agent to ask the employee for clarification.

The notification agent sent an email. The employee didn't respond immediately (it was 4:47 PM on a Friday). The main agent, configured to "retry on uncertainty," called the policy tool again 10 minutes later. The cycle repeated. Seventeen times. Each loop generated new logs, new notifications, new classification attempts, all slightly different because of the LLM's non-deterministic responses.

By Monday morning, the employee had 34 unread emails, the compliance team had flagged the expense for "suspicious activity," and the engineering team spent six hours tracing through logs trying to figure out why the agent kept calling itself.

The Root Cause: No circuit breakers. No explicit termination conditions. No observability into the agent's reasoning chain. Just vibes and a recursive loop with no exit strategy.

This is AI risk management in reverse: building systems that create risk instead of mitigating it.
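A circuit breaker for an agent loop doesn't have to be elaborate. The sketch below caps the total number of steps and the number of times the same action may repeat, and hands off to a human when either limit trips. Everything here (`LoopGuard`, `run_agent`, the limits of 5 and 2) is a hypothetical illustration, not taken from any framework or from the team in the story.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


class LoopBudgetExceeded(RuntimeError):
    """Raised when an agent run exceeds its step or repeat budget."""


@dataclass
class LoopGuard:
    max_steps: int = 5     # hard cap on actions per task (assumed value)
    max_repeats: int = 2   # how often the same action may recur (assumed value)
    history: List[str] = field(default_factory=list)

    def check(self, action: str) -> None:
        """Record the action, or raise if it would exceed a budget."""
        if len(self.history) >= self.max_steps:
            raise LoopBudgetExceeded(f"step budget of {self.max_steps} exhausted")
        if self.history.count(action) >= self.max_repeats:
            raise LoopBudgetExceeded(f"'{action}' already called {self.max_repeats} times")
        self.history.append(action)


def run_agent(next_action: Callable[[List[str]], Optional[str]], guard: LoopGuard) -> str:
    """Drive a hypothetical agent until it finishes or the guard trips."""
    while True:
        action = next_action(guard.history)
        if action is None:  # explicit termination condition
            return "done"
        try:
            guard.check(action)
        except LoopBudgetExceeded as exc:
            return f"escalated to human: {exc}"


def stuck_agent(history: List[str]) -> Optional[str]:
    """Stand-in for an agent that never reaches a final answer."""
    return "policy_lookup"


outcome = run_agent(stuck_agent, LoopGuard())
print(outcome)  # escalated to human: 'policy_lookup' already called 2 times
```

The design choice worth noting is that the guard fails closed: when in doubt, the loop stops and a person gets one message, not seventeen retries.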

🔍 Zoey Pressure Test: Show Me the Receipts

Zoey Quinn, AI Governance Practitioner

"Okay, so your agent demo was cool. But can you show me: No? Then you don't have an agent. You have a liability dressed up as innovation. Vibe-based orchestration is not a governance strategy: it's confidence theater. If you can't instrument it, version it, and test it, you're not ready for production. Full stop."

Why This Matters for AI Governance

The rise of agent frameworks promises autonomy, intelligence, and abstraction. But without architectural discipline (shared tool interfaces, traceability, and testable logic), we're not building intelligent systems.

We're building unintelligible ones.

And the cost is already visible:

- Engineers debugging behavior that changes on every run
- Product managers unable to explain why the AI did what it did
- Teams reinventing tools instead of consolidating shared patterns
- Compliance teams unable to audit decisions because there's no deterministic record

This isn't just a technical problem. It's a governance problem. When your AI agents operate in an opaque dependency graph with no versioning, no ownership, and no observability, you've created a compliance nightmare. AI governance requires traceability. It demands accountability. It needs governance as code, not vibes and crossed fingers.

Research confirms that 92.5% of production agents deliver output to humans, not to other systems, yet most teams still design for pure autonomy instead of building human oversight into the architecture from day one. The proven approach? Confidence thresholds that escalate to humans, preview-and-confirm workflows, and explicit intervention points.
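As a rough sketch of what those three intervention points could look like in code, the function below routes a result by its confidence score. The thresholds (0.90 and 0.60) and the names `Classification` and `route` are assumptions chosen for illustration; real values would need tuning against your own data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Classification:
    label: str
    confidence: float  # assumed to be a score in [0.0, 1.0]


AUTO_APPLY_THRESHOLD = 0.90  # assumed value: above this, act without review
PREVIEW_THRESHOLD = 0.60     # assumed value: above this, show a preview first


def route(result: Classification) -> str:
    """Pick an intervention level from the model's confidence score."""
    if not 0.0 <= result.confidence <= 1.0:
        raise ValueError(f"confidence out of range: {result.confidence}")
    if result.confidence >= AUTO_APPLY_THRESHOLD:
        return "auto_apply"
    if result.confidence >= PREVIEW_THRESHOLD:
        return "preview_and_confirm"
    return "escalate_to_human"


print(route(Classification("office_supplies", 0.95)))  # auto_apply
print(route(Classification("software", 0.72)))         # preview_and_confirm
print(route(Classification("team_lunch", 0.31)))       # escalate_to_human
```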

How We Avoid It

If you're building agentic systems, pause and ask:

❓ Are your tools and planners versioned?

If you can't roll back an agent's behavior to last Tuesday's state, you don't have a system. You have a magic 8-ball.
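One lightweight way to get "last Tuesday's state" back is to snapshot the whole agent configuration and address each snapshot by a content hash. This is only a sketch under assumptions: `ConfigHistory` is a hypothetical in-memory class, and the config is assumed to be JSON-serializable. In practice the same idea is often handled by keeping prompts and tool definitions in version control.

```python
import hashlib
import json
from typing import Dict, List


class ConfigHistory:
    """In-memory history of agent configs, addressed by content hash."""

    def __init__(self) -> None:
        self._snapshots: Dict[str, str] = {}  # version -> canonical JSON
        self._log: List[str] = []             # versions in activation order

    def commit(self, config: dict) -> str:
        """Store a config and return its version id (same content, same id)."""
        canonical = json.dumps(config, sort_keys=True)
        version = hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]
        self._snapshots[version] = canonical
        self._log.append(version)
        return version

    def load(self, version: str) -> dict:
        """Return a fresh copy of a stored config; KeyError if unknown."""
        return json.loads(self._snapshots[version])

    def rollback(self, version: str) -> dict:
        """Make an earlier version active again and return its config."""
        config = self.load(version)
        self._log.append(version)
        return config

    @property
    def active(self) -> str:
        """The most recently activated version (requires at least one commit)."""
        return self._log[-1]


history = ConfigHistory()
v1 = history.commit({"prompt": "Classify the expense.", "tools": {"lookup_policy": "1.2.0"}})
v2 = history.commit({"prompt": "Classify the expense carefully.", "tools": {"lookup_policy": "1.3.0"}})
restored = history.rollback(v1)  # back to the earlier prompt and tool version
```

Because the version id is derived from the content, two teams that commit the same config get the same id, which makes drift visible.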

🔍 Can you trace an agent's decisions across tools, memory, and calls?

Every tool invocation, every reasoning step, every confidence score should be logged and auditable. If you can't reconstruct why the agent made a decision, you can't debug it, or defend it.
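A decorator is one simple way to make sure no tool call goes unrecorded. The sketch below appends a structured record per invocation to an in-memory list. The names `traced`, `TRACE`, and `lookup_policy`, along with the record fields, are hypothetical; a production system would more likely send these records to a tracing or logging backend.

```python
import functools
import time
from typing import Any, Callable, Dict, List

TRACE: List[Dict[str, Any]] = []  # in-memory sink, for illustration only


def traced(tool_name: str, tool_version: str) -> Callable:
    """Wrap a tool so every call is recorded with its run id, args, and outcome."""

    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(run_id: str, **kwargs: Any) -> Any:
            started = time.perf_counter()
            record: Dict[str, Any] = {
                "run_id": run_id,
                "tool": tool_name,
                "version": tool_version,
                "args": kwargs,
            }
            try:
                result = fn(**kwargs)
                record["status"] = "ok"
                record["result"] = result
                return result
            except Exception as exc:
                record["status"] = "error"
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and on failure, so no call is ever missing.
                record["duration_s"] = time.perf_counter() - started
                TRACE.append(record)

        return wrapper

    return decorator


@traced("lookup_policy", "1.2.0")
def lookup_policy(category: str) -> dict:
    """Hypothetical tool that returns a policy with a confidence score."""
    return {"category": category, "limit_usd": 50.0, "confidence": 0.72}


lookup_policy("run-001", category="office_supplies")
```

Filtering `TRACE` by `run_id` then gives the ordered list of everything one agent run touched, which is the raw material for an audit.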

🧱 Are your agents built on reusable interfaces or bespoke glue?

Shared contracts reduce duplication and increase maintainability. If every team is building their own "send email" tool, you're in Clone & Diverge territory.
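Here is a sketch of what a shared contract for that "send email" tool might look like, using Python's `typing.Protocol`. The request and result types, the `name` and `version` attributes, and `InMemoryEmailTool` are all hypothetical; the point is only that every implementation agrees on one typed interface.

```python
from dataclasses import dataclass
from typing import List, Protocol, runtime_checkable


@dataclass(frozen=True)
class EmailRequest:
    to: str
    subject: str
    body: str


@dataclass(frozen=True)
class EmailResult:
    accepted: bool
    message_id: str


@runtime_checkable
class SendEmailTool(Protocol):
    """The one contract every 'send email' implementation is expected to meet."""

    name: str
    version: str

    def __call__(self, request: EmailRequest) -> EmailResult: ...


class InMemoryEmailTool:
    """Test double that satisfies the contract without sending anything."""

    name = "send_email"
    version = "1.0.0"

    def __init__(self) -> None:
        self.outbox: List[EmailRequest] = []

    def __call__(self, request: EmailRequest) -> EmailResult:
        self.outbox.append(request)
        return EmailResult(accepted=True, message_id=f"msg-{len(self.outbox)}")


tool = InMemoryEmailTool()
result = tool(EmailRequest(to="employee@example.com", subject="Expense query", body="Please clarify."))
```

Note that `isinstance` against a runtime-checkable protocol only confirms the members exist; checking the argument and return types is left to a static type checker.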

🤝 Are teams coordinating around shared capabilities, or duplicating them?

This is where governance as code becomes essential. Centralized tool registries, shared testing frameworks, and coordinated agent operations prevent entropy.
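A centralized tool registry can start as little more than a dictionary with an ownership rule. In this sketch a capability has exactly one owning team, and a second team trying to register the same capability gets an error instead of a silent clone. `ToolRegistry`, `DuplicateCapability`, and the team names are hypothetical.

```python
from typing import Callable, Dict, Tuple


class DuplicateCapability(ValueError):
    """Raised when a second team tries to register an existing capability."""


class ToolRegistry:
    def __init__(self) -> None:
        # capability -> (owner, version, implementation)
        self._tools: Dict[str, Tuple[str, str, Callable]] = {}

    def register(self, capability: str, owner: str, version: str, fn: Callable) -> None:
        """Register or upgrade a tool; only the current owner may replace it."""
        existing = self._tools.get(capability)
        if existing is not None and existing[0] != owner:
            raise DuplicateCapability(
                f"'{capability}' is already owned by {existing[0]} (v{existing[1]})"
            )
        self._tools[capability] = (owner, version, fn)

    def resolve(self, capability: str) -> Callable:
        """Return the single shared implementation; KeyError if unregistered."""
        return self._tools[capability][2]

    def describe(self, capability: str) -> Tuple[str, str]:
        """Return (owner, version) so callers know who to ask and what they run."""
        owner, version, _ = self._tools[capability]
        return owner, version


registry = ToolRegistry()
registry.register("send_email", owner="platform-team", version="1.0.0", fn=lambda msg: f"sent: {msg}")

try:
    registry.register("send_email", owner="expenses-team", version="0.1.0", fn=lambda msg: msg)
except DuplicateCapability as exc:
    print(exc)  # 'send_email' is already owned by platform-team (v1.0.0)
```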

Agentic systems can be powerful, but only if they're composable, observable, and maintainable. That means applying the same lessons we learned from DevOps and MLOps:

- Version everything. Prompt templates, tool definitions, agent configurations: all of it.
- Test frequently. Build deterministic test suites. Use confidence thresholds. Implement circuit breakers.
- Own your interfaces. Define clear contracts between agents and tools. Deprecate gracefully.
- Respect the dependency graph. Know what calls what, and why. Visualize it. Audit it.
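A deterministic test suite for an agent usually means taking the model out of the test. The sketch below passes the model in as a plain function so tests can substitute a stub with a fixed answer. `classify_expense`, the label set, and the prompt wording are hypothetical; only `unittest` is a real library here.

```python
import unittest
from typing import Callable

ALLOWED_LABELS = {"office_supplies", "software", "meals"}  # assumed label set


def classify_expense(description: str, model: Callable[[str], str]) -> str:
    """Hypothetical agent step: ask the model for a label, reject off-contract answers."""
    answer = model(f"Classify this expense: {description}").strip().lower()
    return answer if answer in ALLOWED_LABELS else "needs_review"


class ClassifyExpenseTest(unittest.TestCase):
    def test_known_label_is_normalized(self) -> None:
        stub = lambda prompt: "  Office_Supplies \n"  # fixed answer, no model call
        self.assertEqual(classify_expense("Stapler", stub), "office_supplies")

    def test_unknown_label_is_flagged(self) -> None:
        stub = lambda prompt: "Office supplies / Software subscription / Team lunch?"
        self.assertEqual(classify_expense("Mystery charge", stub), "needs_review")

    def test_prompt_contains_description(self) -> None:
        seen = []

        def recording_stub(prompt: str) -> str:
            seen.append(prompt)
            return "meals"

        classify_expense("Team lunch", recording_stub)
        self.assertIn("Team lunch", seen[0])
```

Saved as a module, this would run under `python -m unittest`. It tests the deterministic logic around the model (normalization, validation, fallback), which is where most of the governable behavior lives; evaluating the model's own answers is a separate exercise.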

The emerging discipline of agent operations isn't about waiting for better models; it's about learning which architectures actually survive production contact.

📬 Aoife's Post-Script (APS)

Aoife O'Brien, Systems Anthropologist

Here's the thing about every "Hell" we've built: we always thought this time would be different. This time, the abstraction would hold. This time, the complexity would be manageable. This time, we'd get it right.

But the pattern is clear. We keep building systems we can't understand, debug, or control, and calling it progress.

Agentic systems aren't inherently bad. But they demand a level of operational maturity most teams haven't built yet. They require us to ask harder questions: Does this problem need an agent? Can we instrument it? Can we explain it?

The good news? We've solved these problems before. Version control. Testing frameworks. Observability pipelines. Shared contracts. We know how to build resilient, maintainable systems. We just need to apply those lessons to AI before Agentic Hell becomes the next decade's cautionary tale.

The time to act is now. Not when your recursive loop eats production. Not when your compliance audit fails because you can't trace agent decisions. Not when your team spends six hours debugging non-deterministic behavior.

Now.

Let's build agents we can actually govern. Join us.

